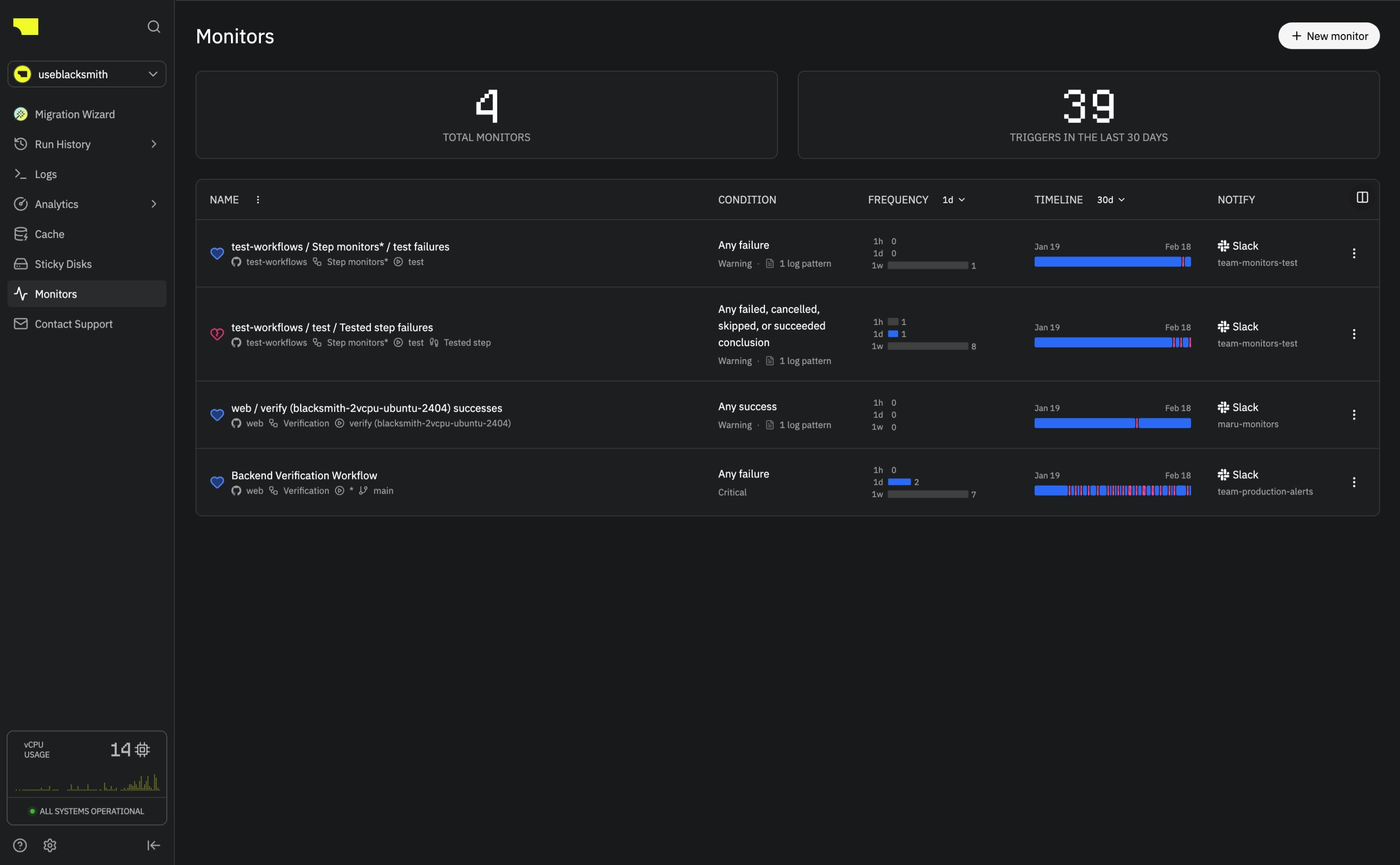

Overview

Monitors watch your GitHub Actions workflows and alert your team in Slack when something goes wrong. A monitor might be “alert me when this job fails 3 times in a row on main” or “notify me whenever this step is skipped.” You can also turn on VM retention, which keeps the runner VM alive when an alert fires so you can SSH in and see exactly what happened. The only setup is connecting your Slack workspace.

Basics

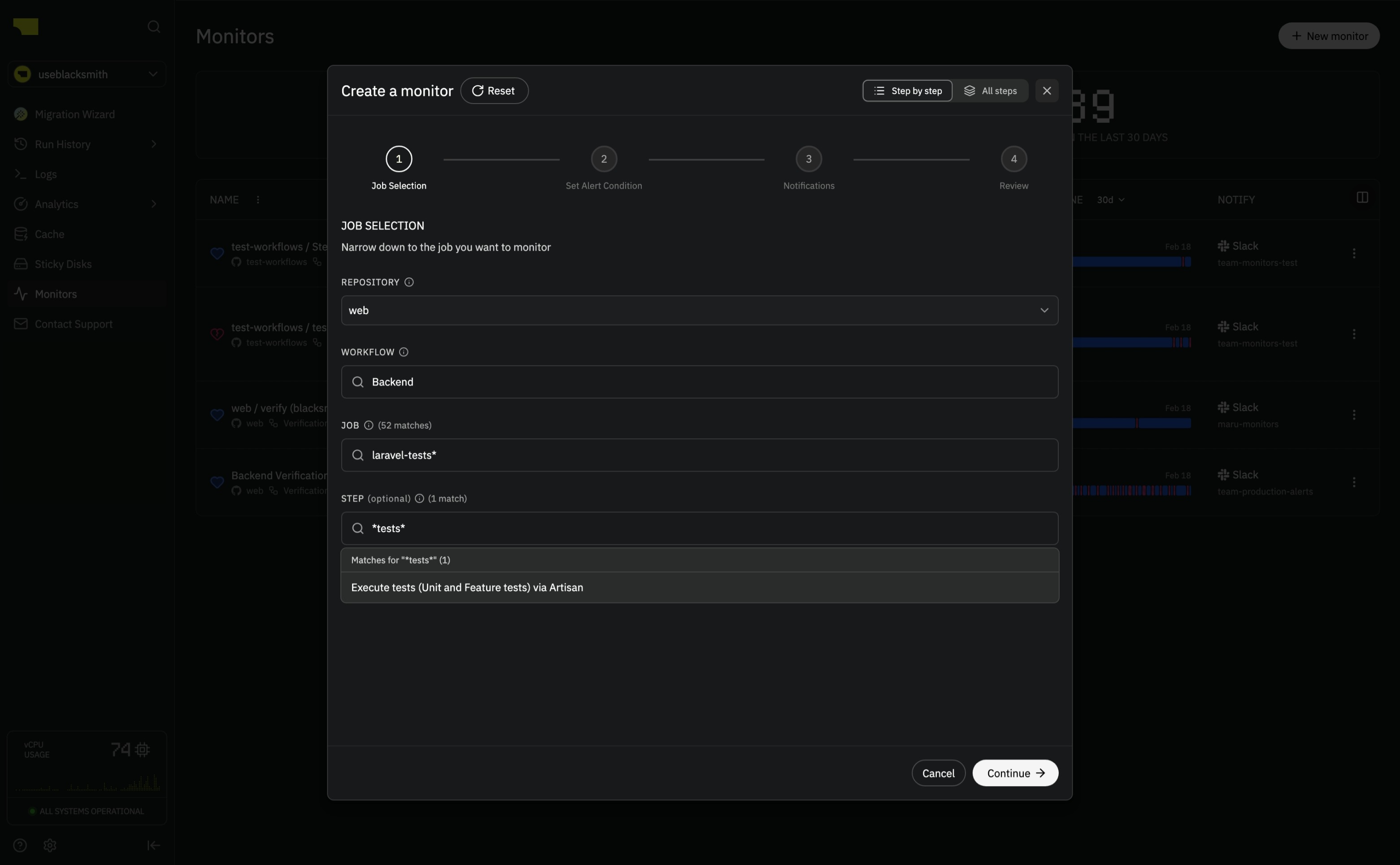

Creating a monitor

Go to Monitors in the sidebar and click New Monitor. The wizard has four steps:

- Pick the repository, workflow, job, and optionally a step to watch

- Set the alert condition: single event or consecutive events, which conclusions to match, severity, cooldown, and optional log pattern filters or VM retention

- Choose Slack channel(s) for notifications

- Review and create

Job selection

Pick what the monitor watches:

- Repository - which repo to watch

- Workflow - which workflow(s)

- Job name - which job(s) within a workflow

- Step name - watch a specific step within a job

- Branch - only alert on events from certain branches

- Exclude branch - skip events from specific branches (e.g., staging or dependabot)

| Pattern | Matches |
|---|---|
| * | Everything |
| deploy* | Anything starting with “deploy” |
| *-test | Anything ending with “-test” |
| build | Exact match only |
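To make the pattern table concrete, here is a minimal sketch that reproduces its four rows with Python's standard `fnmatch` module. This is an illustration only: the `matches` helper is hypothetical, and the source does not say whether Blacksmith's matching is case-sensitive or supports wildcards beyond `*`, so treat those details as assumptions.

```python
from fnmatch import fnmatchcase


def matches(pattern: str, name: str) -> bool:
    """Return True if `name` matches the glob `pattern` (case-sensitive here by assumption)."""
    return fnmatchcase(name, pattern)


# (pattern, candidate name) pairs mirroring the rows of the table above.
examples = [
    ("*", "anything-at-all"),
    ("deploy*", "deploy-prod"),
    ("deploy*", "pre-deploy"),
    ("*-test", "unit-test"),
    ("build", "build"),
    ("build", "build-docker"),
]

for pattern, name in examples:
    print(f"{pattern!r} vs {name!r}: {matches(pattern, name)}")
```

A pattern without a wildcard, such as build, only matches the identical name, which is why build-docker is rejected in the last row.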

Alert condition

Alert after

- Single event - alert each time a matching conclusion occurs

- Consecutive events - only alert after N matching conclusions in a row, which cuts noise from flaky jobs
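The difference between the two modes can be sketched as a streak counter. This is not Blacksmith's implementation: the `ConsecutiveTrigger` class and its field names are hypothetical, and the sketch assumes that any non-matching conclusion resets the streak to zero.

```python
from dataclasses import dataclass


@dataclass
class ConsecutiveTrigger:
    threshold: int          # N matching conclusions in a row (1 behaves like "single event")
    match: frozenset        # conclusions that count toward the streak, e.g. {"failure"}
    streak: int = 0         # current run of matching conclusions

    def observe(self, conclusion: str) -> bool:
        """Record one job conclusion and report whether the alert condition is met."""
        if conclusion in self.match:
            self.streak += 1
        else:
            # Assumption: a non-matching conclusion breaks the streak.
            self.streak = 0
        return self.streak >= self.threshold


trigger = ConsecutiveTrigger(threshold=3, match=frozenset({"failure"}))
history = ["failure", "success", "failure", "failure", "failure"]
print([trigger.observe(c) for c in history])
# [False, False, False, False, True]
```

The single failure at the start never alerts because the following success resets the count, which is how consecutive mode filters out a flaky job.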

Cooldown

Monitors have a cooldown period (default 60 minutes) to prevent alert spam. After an alert fires, subsequent matches are suppressed until the cooldown expires. Resolving a monitor resets the cooldown immediately.

Log pattern filters

You can require that job or step logs match specific patterns before an alert triggers. Patterns use RE2 regex syntax with an optional case-sensitivity toggle, and each monitor supports up to 10 patterns. This is useful when you only care about specific errors (e.g., OutOfMemory, SIGKILL) rather than all failures.
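As a rough illustration of how the cooldown and log pattern filters might gate an alert together, consider the sketch below. The `AlertGate` class is hypothetical. It uses Python's `re` module, which is not RE2 (the simple patterns shown are valid in both), and it assumes that a match on any one pattern is enough, which the source does not specify.

```python
import re
from datetime import datetime, timedelta
from typing import Optional, Sequence

MAX_PATTERNS = 10  # documented limit: up to 10 patterns per monitor


class AlertGate:
    def __init__(self, cooldown_minutes: int = 60,
                 patterns: Sequence[str] = (), case_sensitive: bool = True):
        if len(patterns) > MAX_PATTERNS:
            raise ValueError("at most 10 log patterns per monitor")
        flags = 0 if case_sensitive else re.IGNORECASE
        self.cooldown = timedelta(minutes=cooldown_minutes)
        self.patterns = [re.compile(p, flags) for p in patterns]
        self.last_fired: Optional[datetime] = None

    def logs_match(self, logs: str) -> bool:
        """No patterns means no filtering; otherwise any pattern may match (assumption)."""
        if not self.patterns:
            return True
        return any(p.search(logs) for p in self.patterns)

    def should_fire(self, now: datetime, logs: str) -> bool:
        """Return True and start the cooldown if an alert may fire at `now`."""
        if not self.logs_match(logs):
            return False
        if self.last_fired is not None and now - self.last_fired < self.cooldown:
            return False  # still inside the cooldown window, so suppress
        self.last_fired = now
        return True

    def resolve(self) -> None:
        """Resolving clears the cooldown so the next match can fire immediately."""
        self.last_fired = None


gate = AlertGate(cooldown_minutes=60, patterns=["OutOfMemory", "SIGKILL"])
t0 = datetime(2024, 1, 1, 12, 0)
print(gate.should_fire(t0, "java.lang.OutOfMemoryError"))                    # fires
print(gate.should_fire(t0 + timedelta(minutes=30), "killed by SIGKILL"))     # suppressed
gate.resolve()
print(gate.should_fire(t0 + timedelta(minutes=31), "killed by SIGKILL"))     # fires again
```

Checking the log filter before the cooldown means a failure that does not match any pattern never starts a cooldown window.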

Notifications

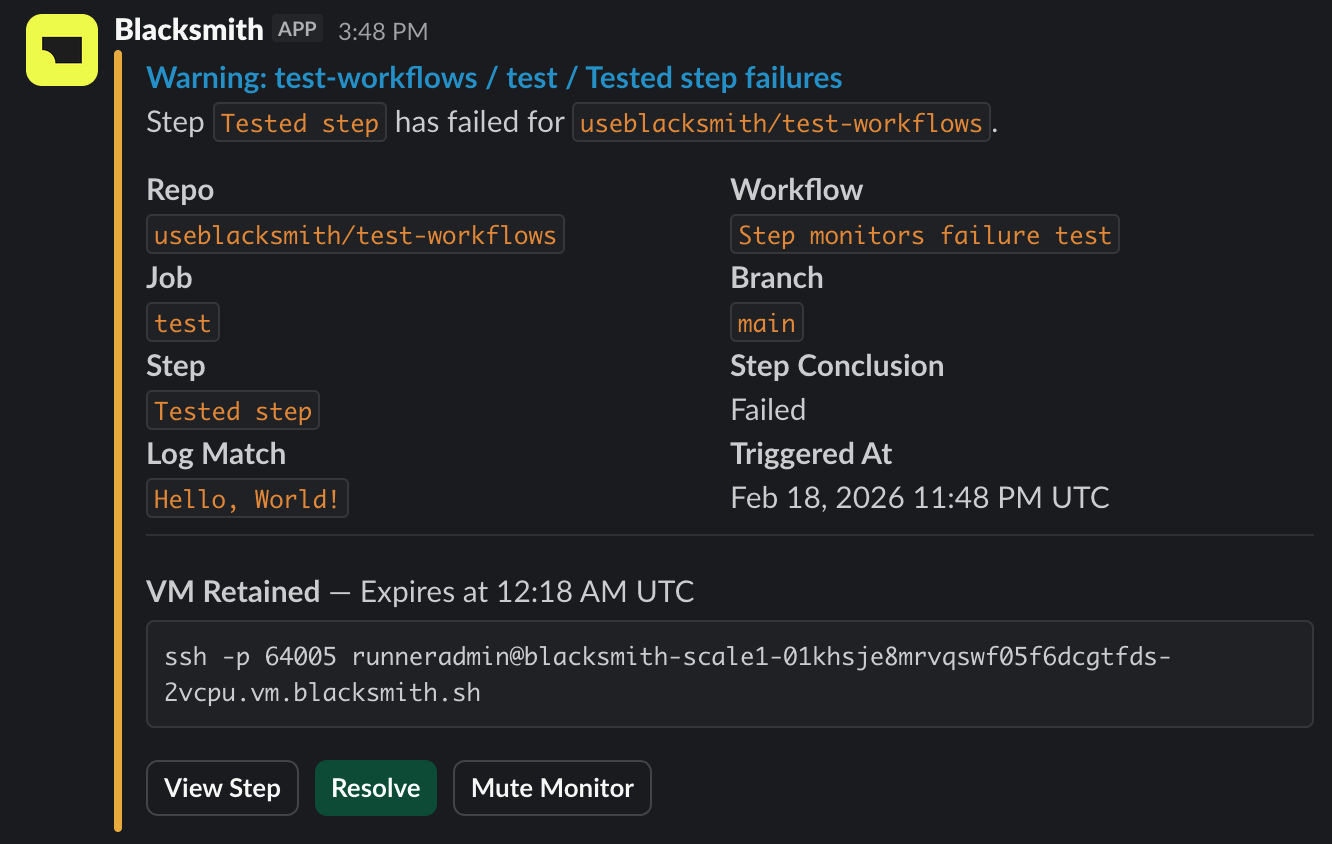

Slack alerts

When a monitor fires, Blacksmith posts to your configured Slack channel(s) with:

- Severity badge and monitor name (links to the monitor detail page)

- Repository, workflow, job, and branch

- Conclusion and timestamp

- A View Job/Step button linking to GitHub Actions

- A Resolve button that clears the alert state and resets the cooldown

- A Mute button to mute the monitor from Slack

Clicking a Slack button requires your Slack account to be linked to Blacksmith. If it isn’t, you’ll be prompted to connect it from your settings page.

Managing monitors

Muting

Mute a monitor to suppress alerts and VM retentions without deleting it. You can mute from the dashboard or the Slack alert, and unmute from the dashboard.

Resolving

Resolve a monitor to clear its alert state and reset the cooldown. You can resolve from the dashboard or the Slack alert.

Editing and deleting

All monitor settings (filters, conditions, notifications, retention) can be edited from the dashboard. Delete a monitor from its action menu.

VM retention

Monitors can keep VMs alive when an alert fires so you can SSH in and debug. The Slack alert includes an SSH command you can paste directly into your terminal, e.g. ssh -p 2222 [email protected].

Job-level retention keeps the VM alive for up to 8 hours after the job completes. To end retention early, go to the monitor’s page or run the following while SSH’d in:

Linux:

VM retention requires a cooldown of at least 60 minutes. Step-level monitors do not support VM retention on cancelled or skipped steps.
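The retention constraints above can be expressed as a small pre-flight check. This is only a sketch: `MonitorConfig`, its fields, and `retention_caveats` are hypothetical names, not part of any Blacksmith API. The source also does not say whether the product rejects such settings or simply skips retention in those cases, so the function only reports caveats.

```python
from dataclasses import dataclass, field
from typing import List, Optional

MIN_RETENTION_COOLDOWN_MINUTES = 60                     # documented minimum when retention is on
UNSUPPORTED_STEP_CONCLUSIONS = {"cancelled", "skipped"}  # no step-level retention for these


@dataclass
class MonitorConfig:
    cooldown_minutes: int = 60
    vm_retention: bool = False
    step_name: Optional[str] = None   # set means this is a step-level monitor
    conclusions: List[str] = field(default_factory=lambda: ["failure"])


def retention_caveats(cfg: MonitorConfig) -> List[str]:
    """List the documented VM retention constraints that this config runs into."""
    caveats: List[str] = []
    if not cfg.vm_retention:
        return caveats
    if cfg.cooldown_minutes < MIN_RETENTION_COOLDOWN_MINUTES:
        caveats.append("VM retention requires a cooldown of at least 60 minutes")
    if cfg.step_name is not None:
        blocked = sorted(UNSUPPORTED_STEP_CONCLUSIONS & set(cfg.conclusions))
        if blocked:
            caveats.append(
                "step-level retention is not supported for: " + ", ".join(blocked)
            )
    return caveats


cfg = MonitorConfig(cooldown_minutes=30, vm_retention=True,
                    step_name="Run tests", conclusions=["failure", "skipped"])
for caveat in retention_caveats(cfg):
    print(caveat)
```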

Severity levels

Pick a severity when creating a monitor:

- Critical - the job is broken and someone needs to look now

- Warning - something failed and should be investigated soon

- Info - worth knowing about, not necessarily urgent

Permissions

- Organization admins can create and manage monitors for any repository

- Users with write access can create monitors for repositories they can access

- All organization members can view monitors for repositories they can access

Pricing

Monitors are free. VM retention billing depends on the type:

- Job-level retention keeps the VM alive after the job completes. Since the VM outlives the job, retention time is billed at the same rate as runner minutes.

- Step-level retention pauses the job at the matched step while the job is still running. Retention time is billed as part of the job’s normal runner minutes.

FAQ

How do I connect Slack?

Go to Settings > Integrations and click Link Account. Once connected, you can pick Slack channels when creating monitors.

Can I monitor specific steps within a job?

Yes. Add a step name filter when creating a monitor. You can use exact matches or glob patterns (e.g., Run tests*).

How do I avoid alert fatigue?

A few things that help:

- Use consecutive events to only alert after N failures in a row

- Set a longer cooldown to limit how often a monitor can fire

- Mute monitors during known maintenance windows

- Narrow monitors to specific workflows, jobs, and branches

- Add log pattern filters to only trigger on specific errors

How do I debug a failed job after it completes?

Enable VM retention on your monitor. When it fires, the VM stays alive and the Slack alert includes an SSH command.

How can I get help with monitors?

Open a support ticket.